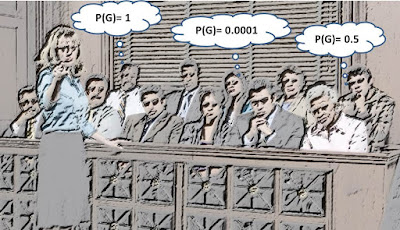

One of the greatest impediments to the use of probabilistic reasoning in legal arguments is the difficulty of agreeing on an appropriate prior probability that the defendant is guilty. Taken literally, the 'innocent until proven guilty' assumption means a prior probability of 0 - a figure that (by Bayesian reasoning) can never be overturned, no matter how much evidence follows. Some have suggested that the logical equivalent is 1/N, where N is the number of people in the world. But this probability is clearly too low, as N includes too many people who could not physically have committed the crime. On the other hand, the often-suggested prior of 0.5 is too high, as it stacks the odds too heavily against the defendant.
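To see why the choice of prior matters so much, here is a minimal sketch of the odds form of Bayes' theorem. The function name `posterior`, the world population figure and the likelihood ratio of one million are illustrative assumptions, not values taken from any case or from the paper.

```python
def posterior(prior: float, likelihood_ratio: float) -> float:
    """Posterior probability of guilt from a prior and a likelihood ratio.

    Uses the odds form of Bayes' theorem:
        posterior odds = prior odds * likelihood ratio
    """
    if not 0.0 <= prior <= 1.0:
        raise ValueError("prior must lie between 0 and 1")
    if prior == 1.0:
        # Prior odds are infinite, so no evidence can reduce certainty of guilt.
        return 1.0
    prior_odds = prior / (1.0 - prior)
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1.0 + posterior_odds)


# Hypothetical figures, chosen only for illustration.
WORLD_POPULATION = 7.5e9   # assumed value of N
STRONG_EVIDENCE_LR = 1e6   # assumed likelihood ratio for the evidence

for label, prior in [
    ("prior 0", 0.0),
    ("prior 1/N", 1.0 / WORLD_POPULATION),
    ("prior 0.5", 0.5),
]:
    print(f"{label}: posterior = {posterior(prior, STRONG_EVIDENCE_LR):.6g}")
```

With a prior of exactly 0 the prior odds are 0, so multiplying by any likelihood ratio still gives 0. The same evidence also leads to very different posteriors under the 1/N and 0.5 priors.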

Therefore, even strong supporters of a Bayesian approach seem to think they can and must ignore the need to consider a prior probability of guilt (indeed it is this thinking that explains the prominence of the 'likelihood ratio' approach discussed so often on this blog).

New work - presented at the 2017 International Conference on Artificial Intelligence and the Law (ICAIL 2017) - shows that, in a large class of cases, it is possible to arrive at a realistic prior that is also as consistent as possible with the legal notion of ‘innocent until proven guilty’. The approach is based first on identifying the 'smallest' time interval and area around the actual crime scene within which the defendant was definitely present, and then estimating the number of people - other than the suspect - who were also within this time/area. If there were n people in total (the suspect included), then before any other evidence is considered each person, including the suspect, has an equal prior probability 1/n of having carried out the crime.
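The calculation described above can be sketched in a few lines. The function name `opportunity_prior` and the figure of 99 other people are hypothetical, chosen for illustration only. In practice the count of other people present would itself be an estimate.

```python
def opportunity_prior(others_present: int) -> float:
    """Prior probability of guilt for the suspect under the opportunity prior.

    `others_present` is the estimated number of people, other than the
    suspect, who were within the same time/area around the crime scene.
    The total n therefore includes the suspect.
    """
    if others_present < 0:
        raise ValueError("others_present cannot be negative")
    n = others_present + 1  # add the suspect to get the total n
    return 1.0 / n


# Hypothetical example: an estimated 99 other people were within the area.
prior = opportunity_prior(99)
print(f"n = 100, prior probability of guilt = {prior}")
```

If nobody else was within the time/area, n is 1 and the prior is 1. This is consistent with the method's assumption that a crime definitely took place and was committed by one person.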

The method applies to cases where we assume a crime has definitely taken place and that it was committed by one person against one other person (e.g. murder, assault, robbery). The work considers both the practical and legal implications of the approach and demonstrates how the prior probability is naturally incorporated into a generic Bayesian network model that allows us to integrate other evidence about the case.

Full details:

Fenton, N. E., Lagnado, D. A., Dahlman, C., & Neil, M. (2017). "The Opportunity Prior: A Simple and Practical Solution to the Prior Probability Problem for Legal Cases". In International Conference on Artificial Intelligence and the Law (ICAIL 2017). Published by ACM. Pre-publication draft.

See also

- Confusion over the Likelihood ratio

- The likelihood ratio and its use in the 'grooming gangs' news story

- Problems with the Likelihood Ratio method for determining probative value of evidence: the need for exhaustive hypotheses

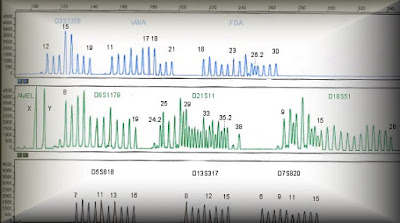

- Problem with likelihood ratio for DNA mixture profiles

- Misleading DNA evidence

- Barry George case: new insights on the evidence